People are comparing concerns about AI to those about the mechanical calculator; here’s why that’s wrong

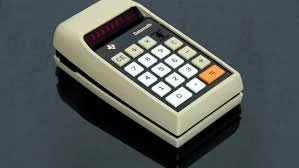

It was around 1970 when handheld calculators as we know them today were introduced to the United States. Of course, “counting machines” like the Mesopotamian abacus have existed for thousands of years. Yet when these calculators were introduced, they caused a wave of fear and concern. Parents, teachers, and scholars alike were afraid that using calculators would prevent students from being able to do basic math. They feared that this technology would “inhibit a student's ability to grasp the concepts behind basic math problems.” Sound familiar?

On the surface, this seems an apt comparison for modern-day concerns about AI: 1) a new technology was introduced, 2) people were concerned, 3) it happened anyway, and 4) they were forced to adapt. But the wide adoption of calculators didn’t actually end up hurting students’ ability to understand basic math. The issue here is that using LLMs does negatively affect your cognitive abilities. Whereas there was no proof of harm from calculators, there is already evidence of decline from LLM usage.

According to an MIT study, people’s cognitive activity was negatively impacted after using an LLM to write an essay, and their vocabulary became more “generic.” “While these tools offer opportunities, their potential impact on cognitive development, critical thinking, and intellectual independence demands a very careful consideration and continued research” (Kosmyna et al., 143). That is already concerning; even more concerning is a study from The Lancet where, after 3 months of AI-assisted endoscopies, endoscopists showed a prominent “deskilling” risk (Budzyń, Romańczyk, et al., via The Lancet).

This means there was a significant decrease in endoscopists’ ability to detect pre-cancerous polyps in their patients without the tool. This is not a student using a calculator to do an intense problem; this is a specialist losing the ability to do the most important aspect of their job.

It’s hard to compare a device update to AI when the device has a blueprint that’s older than the invention of paper. It’s even harder when you’re comparing something that runs on a single battery to something that requires massive data centers, water, and electricity to both run and cool down. It’s harder still to compare a single device that executes math functions to something touted and, most importantly, sold as the fix-all and do-it-all. The calculator was one device with one function covering one subject: mathematics.

Machine learning and predictive text have been around longer than our current definition of “AI.” Yet that history pales in comparison to the 4,000-year history of counting machines and the extensive history of teaching children basic math before they even touch a calculator. We’re taught basic math skills as early as kindergarten, with the basics drilled into us from then onward.

As more advanced calculators became available after the 1980s, they allowed us to do more complex math. However, calculators had a lane and stayed in it. People weren’t positioning calculators as the fix for the climate crisis while pretending not to know that calculators were actively making the climate crisis worse. GenAI is being positioned as the solution not only for the climate but also for script writing, video editing, cancer finding, image screening, surveillance, meeting attending, email writing, teaching, government processes, and millions of other things. Comparing fears about the calculator to fears about AI is like comparing an apple to a multi-hundred-acre industrial farm that has billions of dollars in funds and sells everything from apples and other produce to fake designer bags, furniture, electronics, drugs, houses, medical devices, organs, rockets, and whole countries. Calculators were never sold as a fix-all for problems that we already have the solutions to. And they were never sold as a way to avoid holding responsible parties accountable for their destruction of the planet.

If anything, this comparison serves as a small example of people stepping in to make sure a new technology didn’t get out of hand. What if students were tested on media literacy, critical thought, recall, and the ability to detect sycophancy in something that is mirroring human language, but is not human? If the recently defunded Department of Education could put guardrails in place to ensure that students still retained the ability to fully comprehend the basics, we might be better off.

If you take a journey around the internet, you’ll hear people say things like, “If people use it to be lazy and stupid, then that’s their prerogative.” Sure, but that sounds very “every child left behind” to me. It’s bereft of compassion and feels like we’re pulling up the drawbridge behind us.

As much as I also want to say, “If you want to use GPT to write a generic, repetitive, and off-sounding essay riddled with em dashes and multiple lists of three adjectives, go for it,” I’m not interested in blocking people out. I want them to come in. I want to meet them on the drawbridge and say, “Listen, do what you want, but if you’re gonna do this, know the risks.” I want to give young minds, especially, the ability to fully understand what they’re getting into.

It reminds me of the time an investigator came into my elementary school to talk to us about strangers. He said, “What would you do if someone you didn’t know came up to you at school and said, ‘I know you’re very good with animals. Can you come with me and help me with my dog?’ Would you go?” I remember, as a child, nodding my head: yes, I was very good with animals. And then, wide-eyed, fervently shaking my head no as he informed us that it was a trap. I didn’t know as a kid that there were people out there who wanted to hurt us. But the schools brought someone in to talk to us about predators, and then in junior high, as Facebook gained popularity for the first time, they talked to us about the risks of sharing public information on the site. Yet Facebook has still destroyed lives.

The AI defenders will say things like, “They said the same thing about TV and social media.” Yes, and our attention spans are getting shorter, parents are putting iPads in front of their kids when parenting gets tough, and social media and phone companies have exploited our psychology to keep us glued to our phones. I’m not sure it’s a great thing, but regardless, we have to know and understand that these things can harm us.

There’s so much that this “catch-all” technology is sold to us as a “fix” for. But it’s not meant to fix everything. Some of the challenges it can “fix,” like loneliness, are necessary hurdles for us to overcome to continue growing. Some of these problems exist because we’re lacking human connection, not because we need to prompt our LLM therapist. AI is a tool that’s here whether we like it or not, yes, but it’s a tool that needs guardrails and regulation (not by a couple of players), and it should not be treated as a human.

It is in every way unethical and harmful, from the data centers it’s powered by to the people pushing it to the gig workers it exploits while pretending to be self-sufficient. It is not good, it is harming people, and it will continue to. We can’t be fooled by the marketing of AI. AI doesn’t belong in art or expression. If it’s a tool needed in post for editing, sure, but AI “art” strips us of human expression. AI writing strips us of our voice, vocabulary, and flavor. AI-made videos are shitty and lack beauty and depth.

However, I’m glad it’s used to run through millions of points of code in seconds and to help disabled people through assistive technology. It’s cool that it’s used to show some radiologists areas of the X-ray they may want to check on, but I’m very thankful for the radiologists who check for things AI might’ve missed anyway. Because that is their job. And because AI can’t help being wrong most of the time. If a student doesn’t check the answer on a calculator to see if it makes sense, they get the answer wrong. If medical professionals miss things that AI didn’t catch, people can die. That’s a big difference.

To understand and appreciate this technology, we must not view it as an infallible savior, but as a tool that’s being oversold, does a couple of things well, and shouldn’t be used for others. It is a tool that has required, and will continue to require, constant oversight.

If you like AI, you must hold it accountable. We only have this one life and this one planet; let’s not make it worse.

Sources:

https://files.eric.ed.gov/fulltext/ED525547.pdf

https://arxiv.org/pdf/2506.08872v1

https://www.thelancet.com/journals/langas/article/PIIS2468-1253(25)00133-5/abstract